RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

1. DISCRETE RANDOM VARIABLES

1.1. Definition of a Discrete Random Variable. A random variable X is said to be discrete if it can

assume only a finite or countable infinite number of distinct values. A discrete random variable

can be defined on both a countable or uncountable sample space.

1.2. Probability for a discrete random variable. The probability that X takes on the value x, P(X=x),

is defined as the sum of the probabilities of all sample points in Ω that are assigned the value x. We

may denote P(X=x) by p(x) or p

X

(x). The expression p

X

(x) is a function that assigns probabilities

to each possible value x; thus it is often called the probability function for the random variable X.

1.3. Probability distribution for a discrete random variable. The probability distribution for a

discrete random variable X can be represented by a formula, a table, or a graph, which provides

p

X

(x) = P(X=x) for all x. The probability distribution for a discrete random variable assigns nonzero

probabilities to only a countable number of distinct x values. Any value x not explicitly assigned a

positive probability is understood to be such that P(X=x) = 0.

The function p

X

(x)= P(X=x) for each x within the range of X is called the probability distribution

of X. It is often called the probability mass function for the discrete random variable X.

1.4. Properties of the probability distribution for a discrete random variable. A function can

serve as the probability distribution for a discrete random variable X if and only if it s values,

p

X

(x), satisfy the conditions:

a: p

X

(x) ≥ 0 for each value within its domain

b:

P

x

p

X

(x)=1, where the summation extends over all the values within its domain

1.5. Examples of probability mass functions.

1.5.1. Example 1. Find a formula for the probability distribution of the total number of heads ob-

tained in four tosses of a balanced coin.

The sample space, probabilities and the value of the random variable are given in table 1.

From the table we can determine the probabilities as

P (X =0) =

1

16

,P(X =1) =

4

16

,P(X =2) =

6

16

,P(X =3) =

4

16

,P(X =4) =

1

16

(1)

Notice that the denominators of the five fractions are the same and the numerators of the five

fractions are 1, 4, 6, 4, 1. The numbers in the numerators is a set of binomial coefficients.

1

16

=

4

0

1

16

,

4

16

=

4

1

1

16

,

6

16

=

4

2

1

16

,

4

16

=

4

3

1

16

,

1

16

=

4

4

1

16

We can then write the probability mass function as

Date: November 1, 2005.

1

2 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

TABLE 1. Probability of a Function of the Number of Heads from Tossing a Coin

Four Times.

Table R.1

Tossing a Coin Four Times

Element of sample space Probability Value of random variable X (x)

HHHH 1/16 4

HHHT 1/16 3

HHTH 1/16 3

HTHH 1/16 3

THHH 1/16 3

HHTT 1/16 2

HTHT 1/16 2

HTTH 1/16 2

THHT 1/16 2

THTH 1/16 2

TTHH 1/16 2

HTTT 1/16 1

THTT 1/16 1

TTHT 1/16 1

TTTH 1/16 1

TTTT 1/16 0

p

X

(x)=

4

x

16

for x =0, 1 , 2 , 3 , 4 (2)

Note that all the probabilities are positive and that they sum to one.

1.5.2. Example 2. Roll a red die and a green die. Let the random variable be the larger of the two

numbers if they are different and the common value if they are the same. There are 36 points in

the sample space. In table 2 the outcomes are listed along with the value of the random variable

associated with each outcome.

The probability that X = 1, P(X=1) = P[(1, 1)] = 1/36. The probability that X = 2, P(X=2) = P[(1, 2),

(2,1), (2, 2)] = 3/36. Continuing we obtain

P (X =1) =

1

36

,P(X =2) =

3

36

,P(X =3) =

5

36

P (X =4) =

7

36

,P(X =5) =

9

36

,P(X =6) =

11

36

We can then write the probability mass function as

p

X

(x)=P (X = x)=

2 x − 1

36

for x =1, 2 , 3 , 4 , 5 , 6

Note that all the probabilities are positive and that they sum to one.

1.6. Cumulative Distribution Functions.

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 3

TABLE 2. Possible Outcomes of Rolling a Red Die and a Green Die – First Number

in Pair is Number on Red Die

Green (A) 1 2 3 4 5 6

Red (D)

1

11

1

12

2

13

3

14

4

15

5

16

6

2

21

2

22

2

23

3

24

4

25

5

26

6

3

31

3

32

3

33

3

34

4

35

5

36

6

4

41

4

42

4

43

4

44

4

45

5

46

6

5

51

5

52

5

53

5

54

5

55

5

56

6

6

61

6

62

6

63

6

64

6

65

6

66

6

1.6.1. Definition of a Cumulative Distribution Function. If X is a discrete random variable, the function

given by

F

X

(x)=P (x ≤ X)=

X

t ≤x

p(t) for −∞≤x ≤∞ (3)

where p(t) is the value of the probability distribution of X at t, is called the cumulative distribution

function of X. The function F

X

(x) is also called the distribution function of X.

1.6.2. Properties of a Cumulative Distribution Function. The values F

X

(X) of the distribution function

of a discrete random variable X satisfy the conditions

1: F(-∞) = 0 and F(∞) =1;

2: If a < b, then F(a) ≤ F(b) for any real numbers a and b

1.6.3. First example of a cumulative distribution function. Consider tossing a coin four times. The

possible outcomes are contained in table 1 and the values of p(·) in equation 2. From this we can

determine the cumulative distribution function as follows.

F (0) = (0) =

1

16

F (1) = (0) + p(1) =

1

16

+

4

16

=

5

16

F (2) = (0) + p(1) + p(2) =

1

16

+

4

16

+

6

16

=

11

16

F (3) = (0) + p(1) + p(2) + p(3) =

1

16

+

4

16

+

6

16

+

4

6

=

15

16

F (4) = p(0) + p(1) + p(2) + p(3) + p(4) =

1

16

+

4

16

+

6

16

+

4

6

+

1

16

=

16

16

We can write this in an alternative fashion as

4 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

F

X

(x)=

0 for x < 0

1

16

for 0 ≤ x<1

5

16

for 1 ≤ x<2

11

16

for 2 ≤ x<3

15

16

for 3 ≤ x<4

1 for x ≥ 4

1.6.4. Second example of a cumulative distribution function. Consider a group of N individuals, M of

whom are female. Then N-M are male. Now pick n individuals from this population without

replacement. Let x be the number of females chosen. There are

M

x

ways of choosing x females

from the M in the population and

N − M

n −x

ways of choosing n-x of the N - M males. Therefore,

there are

M

x

×

N − M

n −x

ways of choosing x females and n-x males. Because there are

N

n

ways of

choosing n of the N elements in the set, and because we will assume that they all are equally likely

the probability of x females in a sample of size n is given by

p

X

(x)=P (X = x)=

M

x

N − M

n −x

N

n

for x =0, 1 , 2 , 3 , ···,n

and x ≤ M, and n − x ≤ N − M.

(4)

For this discrete distribution we compute the cumulative density by adding up the appropriate

terms of the probability mass function.

F (0) = p(0)

F (1) = p(0) + p(1)

F (2) = p(0) + p(1) + p(2)

F (3) = p(0) + p(1) + p(2) + px(3)

.

.

.

F (n)=p(0) + p(1) + p(2) + p(3) + ··· + p(n)

(5)

Consider a population with four individuals, three of whom are female, denoted respectively

by A, B, C, D where A is a male and the others are females. Then consider drawing two from this

population. Based on equation 4 there should be

4

2

= 6 elements in the sample space. The sample

space is given by

TABLE 3. Drawing Two Individuals from a Population of Four where Order

Does Not Matter (no replacement)

Element of sample space Probability Value of random variable X

AB 1/6 1

AC 1/6 1

AD 1/6 1

BC 1/6 2

BD 1/6 2

CD 1/6 2

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 5

We can see that the probability of 2 females is

1

2

. We can also obtain this using the formula as

follows.

p(2) = P (X =2)=

3

2

1

0

4

2

=

(3)(1)

6

=

1

2

(6)

Similarly

p(1) = P (X =1)=

3

1

1

1

4

2

=

(3)(1)

6

=

1

2

(7)

We cannot use the formula to compute f(0) because (2 - 0) 6≤ (4 - 3). f(0) is then equal to 0. We can

then compute the cumulative distribution function as

F (0) = p(0) = 0

F (1) = p(0) + p(1) =

1

2

F (2) = p(0) + p(1) + p(2) = 1

(8)

1.7. Expected value.

1.7.1. Definition of expected value. Let X be a discrete random variable with probability function

p

X

(x). Then the expected value of X, E(X), is defined to be

E(X)=

X

x

xp

X

(x) (9)

if it exists. The expected value exists if

X

x

|x | p

X

(x) < ∞ (10)

The expected value is kind of a weighted average. It is also sometimes referred to as the popu-

lation mean of the random variable and denoted µ

X

.

1.7.2. First example computing an expected value. Toss a die that has six sides. Observe the number

that comes up. The probability mass or frequency function is given by

p

X

(x)=P (X = x)=

(

1

6

for x =1, 2, 3, 4, 5, 6

0 otherwise

(11)

We compute the expected value as

E(X)=

X

xX

xp

X

(x)

=

6

X

i =1

i

1

6

=

1+2+3+4+5+6

6

=

21

6

=3

1

2

(12)

6 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

1.7.3. Second example computing an expected value. Consider a group of 12 television sets, two of

which have white cords and ten which have black cords. Suppose three of them are chosen at ran-

dom and shipped to a care center. What are the probabilities that zero, one, or two of the sets with

white cords are shipped? What is the expected number with white cords that will be shipped?

It is clear that x of the two sets with white cords and 3-x of the ten sets with black cords can be

chosen in

2

x

×

10

3−x

ways. The three sets can be chosen in

12

3

ways. So we have a probability

mass function as follows.

p

X

(x)=P (X = x)=

2

x

10

3−x

12

3

for x =0, 1 , 2 (13)

For example

p(0) = P (X =0)=

2

0

10

3

−0

12

3

=

(1) (120)

220

=

6

11

(14)

We collect this information as in table 4.

TABLE 4. Probabilities for Television Problem

x 0 1 2

p

X

(x) 6/11 9/22 1/22

F

X

(x) 6/11 21/22 1

We compute the expected value as

E(X)=

X

xX

xp

X

(x)

= (0)

6

11

+ (1)

9

22

+ (2)

1

22

=

11

22

=

1

2

(15)

1.8. Expected value of a function of a random variable.

Theorem 1. Let X be a discrete random variable with probability mass function p

X

(x) and g(X) be a real-

valued function of X. Then the expected value of g(X) is given by

E[g(X)] =

X

x

g(x) p

X

(x) . (16)

Proof for case of finite values of X. Consider the case where the random variable X takes on a finite

number of values x

1

,x

2

,x

3

, ···x

n

. The function g(x) may not be one-to-one (the different values

of x

i

may yield the same value of g(x

i

). Suppose that g(X) takes on m different values (m ≤ n). It

follows that g(X) is also a random variable with possible values g

1

,g

2

,g

3

,...g

m

and probability

distribution

P [g (X)=g

i

]=

X

∀x

j

such that

g(x

j

)=g

i

p(x

j

)=p

∗

(g

i

) (17)

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 7

for all i = 1, 2, . . . m. Here p

∗

(g

i

) is the probability that the experiment results in a value for the

function f of the initial random variable of g

i

. Using the definition of expected value in equation

we obtain

E[g(X)] =

m

X

i

=1

g

i

p

∗

(g

i

). (18)

Now substitute in to obtain

E[g(X)] =

m

X

i =1

g

i

p

∗

(g

i

) .

=

m

X

i =1

g

i

X

∀x

j

3

g ( x

j

)=g

i

p ( x

j

)

=

m

X

i =1

X

∀x

j

3

g ( x

j

)=g

i

g

i

p ( x

j

)

=

n

X

j =1

g (x

j

) p( x

j

).

(19)

1.9. Properties of mathematical expectation.

1.9.1. Constants.

Theorem 2. Let X be a discrete random variable with probability function p

X

(x) and c be a constant. Then

E(c) = c.

Proof. Consider the function g(X) = c. Then by theorem 1

E[c] ≡

X

x

cp

X

(x)=c

X

x

p

X

(x) (20)

But by property 1.4b, we have

X

x

p

X

(x)=1

and hence

E (c)=c · (1) = c. (21)

8 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

1.9.2. Constants multiplied by functions of random variables.

Theorem 3. Let X be a discrete random variable with probability function p

X

(x), g(X) be a function of X,

and let c be a constant. Then

E [ cg( X )] ≡ cE[(g ( X )] (22)

Proof. By theorem 1 we have

E[cg(X)] ≡

X

x

cg(x) p

X

(x)

= c

X

x

g(x) p

X

(x)

= cE[g(X)]

(23)

1.9.3. Sums of functions of random variables.

Theorem 4. Let X be a discrete random variable with probability function p

X

(x), g

1

(X) ,g

2

(X) ,g

3

(X) , ···,g

k

(X)

be k functions of X. Then

E [g

1

(X)+g

2

(X)+g

3

(X)+···+ g

k

(X)] ≡ E[g

1

(X)] + E[g

2

(X)] + ···+ E[g

k

(X)] (24)

Proof for the case of k = 2. By theorem 1 we have we have

E [g

1

(X)+g

2

(X)] ≡

X

x

[g

1

(x)+g

2

(x)] p

X

(x)

≡

X

x

g

1

(x) p

X

(x)+

X

x

g

2

(x) p

X

(x)

= E [g

1

(X)] + E [ g

2

(X)] ,

(25)

1.10. Variance of a random variable.

1.10.1. Definition of variance. The variance of a random variable X is defined to be the expected

value of (X − µ)

2

. That is

V (X)=E

( X − µ )

2

(26)

The standard deviation of X is the positive square root of V(X).

1.10.2. Example 1. Consider a random variable with the following probability distribution.

TABLE 5. Probability Distribution for X

x p

X

(x)

0 1/8

1 1/4

2 3/8

3 1/4

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 9

We can compute the expected value as

µ = E(X)=

3

X

x =0

xp

X

(x)

= (0)

1

8

+ (1)

1

4

+ (2)

3

8

+ (3)

1

4

=1

3

4

(27)

We compute the variance as

σ

2

= E[X − µ)

2

]=Σ

3

x =0

(x − µ)

2

p

X

(x)

=(0 − 1.75)

2

1

8

+(1− 1.75)

2

1

4

+(2− 1.75)

2

3

8

+(3− 1.75)

2

1

4

= .9375

and the standard deviation as

σ

2

=0.9375

σ =+

√

σ

2

=

√

.9375 = 0.97.

1.10.3. Alternative formula for the variance.

Theorem 5. Let X be a discrete random variable with probability function p

X

(x); then

V (X) ≡ σ

2

= E

(X − µ )

2

= E

X

2

− µ

2

(28)

Proof. First write out the first part of equation 28 as follows

V (X) ≡ σ

2

= E

(X − µ )

2

= E

X

2

− 2 µX + µ

2

= E

X

2

− E (2 µX)+E

µ

2

(29)

where the last step follows from theorem 4. Note that µ is a constant, then apply theorems 3 and

2 to the second and third terms in equation 28 to obtain

V (X) ≡ σ

2

= E

( X − µ )

2

= E

X

2

− 2 µE(X)+ µ

2

(30)

Then making the substitution that E(X) = µ, we obtain

V (X) ≡ σ

2

= E

X

2

− µ

2

(31)

1.10.4. Example 2. Die toss.

Toss a die that has six sides. Observe the number that comes up. The probability mass or fre-

quency function is given by

p

X

(x)=P (X = x)=

(

1

6

for x =1, 2, 3, 4, 5, 6

0 otherwise

. (32)

We compute the expected value as

10 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

E(X)=

X

xX

xp

X

(x)

=

6

X

i

=1

i

1

6

=

1+2+3+4+5+6

6

=

21

6

=3

1

2

(33)

We compute the variance by then computing the E(X

2

) as follows

E(X

2

)=

X

xX

x

2

p

X

(x)

=

6

X

i =1

i

2

1

6

=

1+4+9+16+2+36

6

=

91

6

=15

1

6

(34)

We can then compute the variance using the formula Var(X) = E(X

2

)-E

2

(X) and the fact the E(X)

= 21/6 from equation 33.

Var( X)=E (X

2

) − E

2

(X)

=

91

6

−

21

6

2

=

91

6

−

441

36

=

546

36

−

441

36

=

105

36

=

35

12

=2.9166

(35)

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 11

2. THE ”DISTR IBUTION” OF RANDOM VARIABLES IN GENERAL

2.1. Cumulative distribution function. The cumulative distribution function (cdf) of a random

variable X, denoted by F

X

(·), is defined to be the function with domain the real line and range the

interval [0,1], which satisfies F

X

(x)=P

X

[X ≤ x] = P [ {ω : X(ω) ≤ x }] for every real number x.

F has the following properties:

F

X

(−∞) = lim

x→−∞

F

X

(x)=0,F

X

(+∞) = lim

x→+∞

F

X

(x)=1, (36a)

F

X

(a) ≤ F

X

(b) for a < b, nondecreasing function of x, (36b)

lim

0<h→0

F

X

(x + h)=F

X

(x), continuous from the right, (36c)

2.2. Example of a cumulative distribution function. Consider the following function

F

X

(x)=

1

1+e

−x

(37)

Check condition 36a as follows.

lim

x→−∞

F

X

(x) = lim

x→−∞

1

1+e

−x

= lim

x→∞

1

1+e

x

=0

lim

x→∞

F

X

(x) = lim

x→∞

1

1+e

−x

=1

(38)

To check condition 36b differentiate the cdf as follows

dF

X

( x )

dx

=

d

1

1+e

−x

dx

=

e

−x

(1 + e

−x

)

2

> 0

(39)

Condition 36c is satisfied because F

X

(x) is a continuous function.

2.3. Discrete and continuous random variables.

2.3.1. Discrete random variable. A random variable X will be said to be discrete if the range of X is

countable, that is if it can assume only a finite or countably infinite number of values. Alternatively,

a random variable is discrete if F

X

(x) is a step function of x.

2.3.2. Continuous random variable. A random variable X is continuous if F

X

(x) is a continuous func-

tion of x.

2.4. Frequency (probability mass) function of a discrete random variable.

2.4.1. Definition of a frequency (discrete density) function. If X is a discrete random variable with the

distinct values, x

1

,x

2

, ···,x

n

, ···, then the function denoted by p(·) and defined by

p

X

(x)=

(

P [X = x

j

] x = x

j

,j=1, 2 , ... , n, ...

0 x 6= x

j

(40)

is defined to be the frequency, discrete density, or probability mass function of X. We will often

write f

X

(x) for p

X

(x) to denote frequency as compared to probability.

A discrete probability distribution on R

k

is a probability measure P such that

12 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

∞

X

i =1

P ({x

i

})=1 (41)

for some sequence of points in R

k

, i.e. the sequence of points that occur as an outcome of the

experiment. Given the definition of the frequency function in equation 40, we can also say that any

non-negative function p on R

k

that vanishes except on a sequence x

1

,x

2

, ···,x

n

, ··· of vectors and

that satisfies

∞

X

i =1

p(x

i

)=1

defines a unique probability distribution by the relation

P ( A )=

X

x

i

A

p ( x

i

) (42)

2.4.2. Properties of discrete density functions. As defined in section 1.4, a probability mass function

must satisfy

p

X

(x

j

) > 0, for j =1, 2, ... (43a)

p

X

(x)=0, for x 6= x

j

; j =1, 2, ..., (43b)

X

j

p

X

(x)

j

=1 (43c)

2.4.3. Example 1 of a discrete density function. Consider a probability model where there are two

possible outcomes to a single action (say heads and tails) and consider repeating this action several

times until one of the outcomes occurs. Let the random variable be the number of actions required

to obtain a particular outcome (say heads). Let p be the probability that outcome is a head and (1-p)

the probability of a tail. Then to obtain the first head on the x

th

toss, we need to have a tail on the

previous x-1 tosses. So the probability of the first had occurring on the x

th

toss is given by

p

X

(x)=P (X = x)=

(

(1 − p)

x − 1

pforx=1, 2 , ...

0 otherwise

(44)

For example the probability that it takes 4 tosses to get a head is 1/16 while the probability it

takes 2 tosses is 1/4.

2.4.4. Example 2 of a discrete density function. Consider tossing a die. The sample space is {1, 2, 3, 4,

5, 6}. The elements are {1}, {2}, ... . The frequency function is given by

p

(

x)=P (X = x)=

(

1

6

for x =1, 2, 3, 4, 5, 6

0 otherwise

. (45)

The density function is represented in figure 1.

2.5. Probability density function of a continuous random variable.

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 13

FIGURE 1. Frequency Function for Tossing a Die

1 2 3 4

5

6

7

8 9

x

1

6

1

3

1

2

2

3

5

6

1

fHxL

2.5.1. Alternative definition of continuous random variable. In section 2.3.2, we defined a random vari-

able to be continuous if F

X

(x) is a continuous function of x. We also say that a random variable X

is continuous if there exists a function f(·) such that

F

X

(x)=

Z

x

−∞

f(u) du (46)

for every real number x. The integral in equation 46 is a Riemann integral evaluated from -∞ to

a real number x.

2.5.2. Definition of a probability density frequency function (pdf). The probability density function,

f

X

(x), of a continuous random variable X is the function f(·) that satisfies

F

X

(x)=

Z

x

−∞

f

X

(u) du (47)

2.5.3. Properties of continuous density functions.

f

X

(x) ≥ 0 ∀x (48a)

Z

∞

−∞

f

X

(x) dx =1, (48b)

Analogous to equation 42, we can write in the continuous case

P (XA)=

Z

A

f

X

(x) dx (49)

14 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

where the integral is interpreted in the sense of Lebesgue.

Theorem 6. For a density function f

X

(x) defined over the set of all real numbers the following holds

P (a ≤ X ≤ b)=

Z

b

a

f

X

(x) dx (50)

for any real constants a and b with a ≤ b.

Also note that for a continuous random variable X the following are equivalent

P (a ≤ X ≤ b)=P (a ≤ X<b)=P ( a<X≤ b)=P (a<X<b) (51)

Note that we can obtain the various probabilities by integrating the area under the density func-

tion as seen in figure 2.

FIGURE 2. Area under the Density Function as Probability

fHxL

2.5.4. Example 1 of a continuous density function. Consider the following function

f

X

(x)=

(

k · e

− 3 x

for x > 0

0 elsewhere

. (52)

First we must find the value of k that makes this a valid density function. Given the condition

in equation 48b we must have that

Z

∞

−∞

f

X

(x) dx =

Z

∞

0

k · e

− 3 x

dx =1 (53)

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 15

Integrate the second term to obtain

Z

∞

0

k · e

− 3 x

dx = k · lim

t →∞

e

− 3 x

−3

|

t

0

=

k

3

(54)

Given that this must be equal to one we obtain

k

3

=1

⇒ k =3

(55)

The density is then given by

f

X

(x)=

(

3 · e

−3 x

for x > 0

0 elsewhere

. (56)

Now find the probability that (1 ≤ X ≤ 2).

P (1 ≤ X ≤ 2) =

Z

2

1

3 · e

−

3 x

dx

= − e

− 3 x

|

2

1

= − e

− 6

+ e

− 3

= − 0.00247875 + 0.049787

=0.047308

(57)

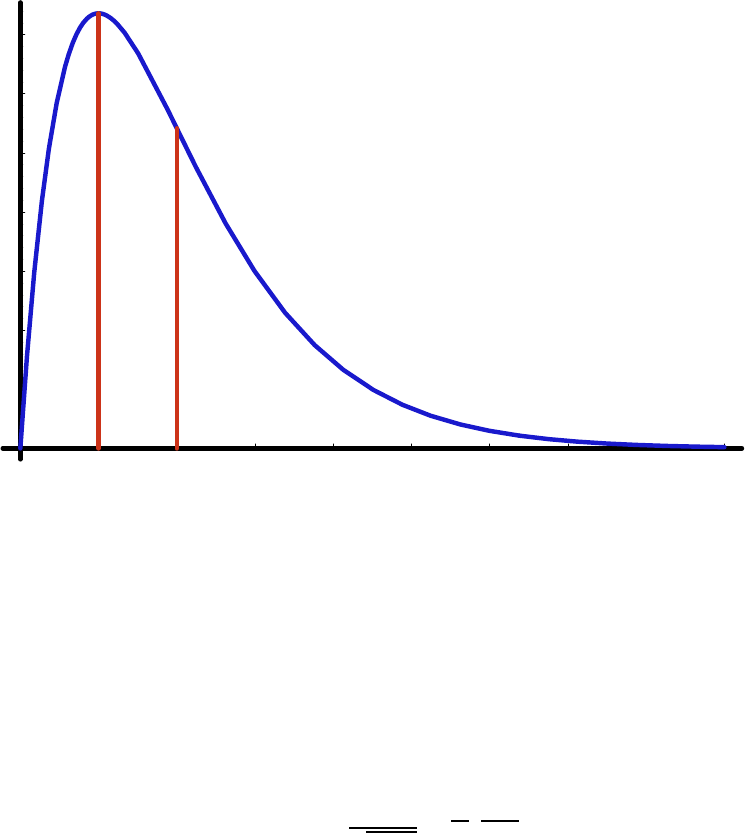

2.5.5. Example 2 of a continuous density function. Let X have p.d.f.

f

X

(x)=

(

x · e

− x

for 0 ≤ x ≤∞

0 elsewhere

. (58)

This density function is shown in figure 3.

We can find the probability that (1 ≤ X ≤ 2) by integration

P (1 ≤ X ≤ 2) =

Z

2

1

x · e

−x

dx (59)

First integrate the expression on the right by parts letting u = x and dv = e

−x

dx. Then du = dx

and v = - e

−x

dx. We then have

P (1 ≤ X ≤ 2) = − xe

− x

|

2

1

−

Z

2

1

−e

− x

dx

= − 2 e

− 2

+ e

− 1

−

e

− x

|

2

1

= − 2 e

− 2

+ e

− 1

− e

− 2

+ e

− 1

= − 3 e

− 2

+2e

− 1

=

−3

e

2

+

2

e

= − 0.406 + 0.73575

=0.32975

(60)

16 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

FIGURE 3. Graph of Density Function xe

−x

2 4 6 8

x

0.05

0.1

0.15

0.2

0.25

0.3

0.35

fHxL

This is represented by the area between the lines in figure 4.

We can also find the distribution function in this case.

F

X

(x)=

Z

x

0

t · e

−t

dt (61)

Make the u dv substitution as before to obtain

F

X

(x)=− te

−t

|

x

0

−

Z

x

0

−e

− t

dt

= − te

−t

|

x

0

− e

− t

|

x

0

= e

− t

(−1 − t)|

x

0

= e

− x

(−1 − x) − e

− 0

( −1 − 0)

= e

− x

(−1 − x)+1

=1 − e

− x

(1 + x)

(62)

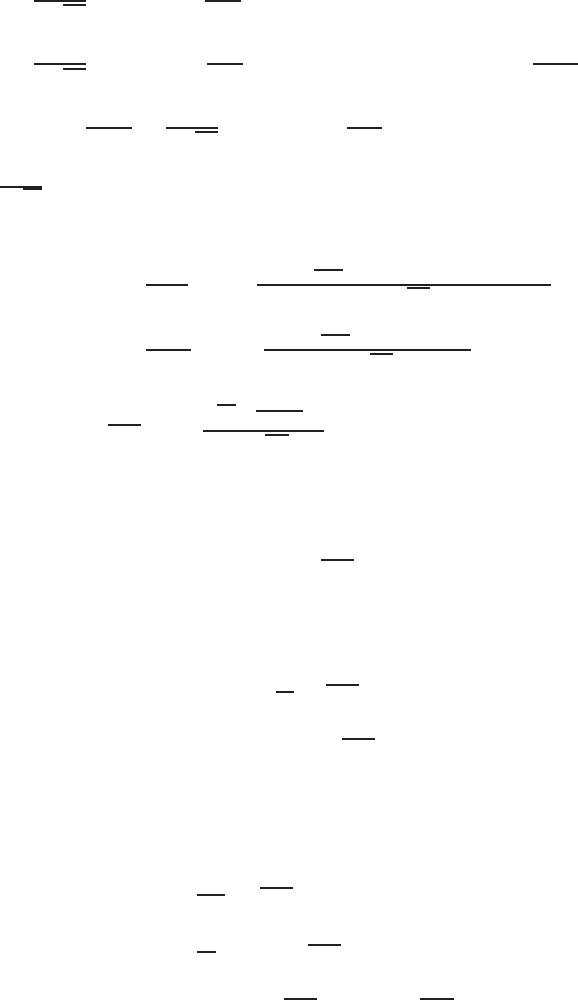

The distribution function is shown in figure 5.

Now consider the probability that (1 ≤ X ≤ 2)

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 17

FIGURE 4. P (1 ≤ X ≤ 2)

1 2 3 4 5 6 7 8 9

x

0.05

0.1

0.15

0.2

0.25

0.3

0.35

fHxL

P (1 ≤ X ≤ 2) = F (2) − F (1)

=1 − e

− 2

(1 + 2) − 1+e

− 1

(1 + 1)

=2e

− 1

− 3 e

− 2

=0.73575 − 0.406

=0.32975

(63)

We can see this as the difference in the values of F

X

(x) at 1 and at 2 in figure 6

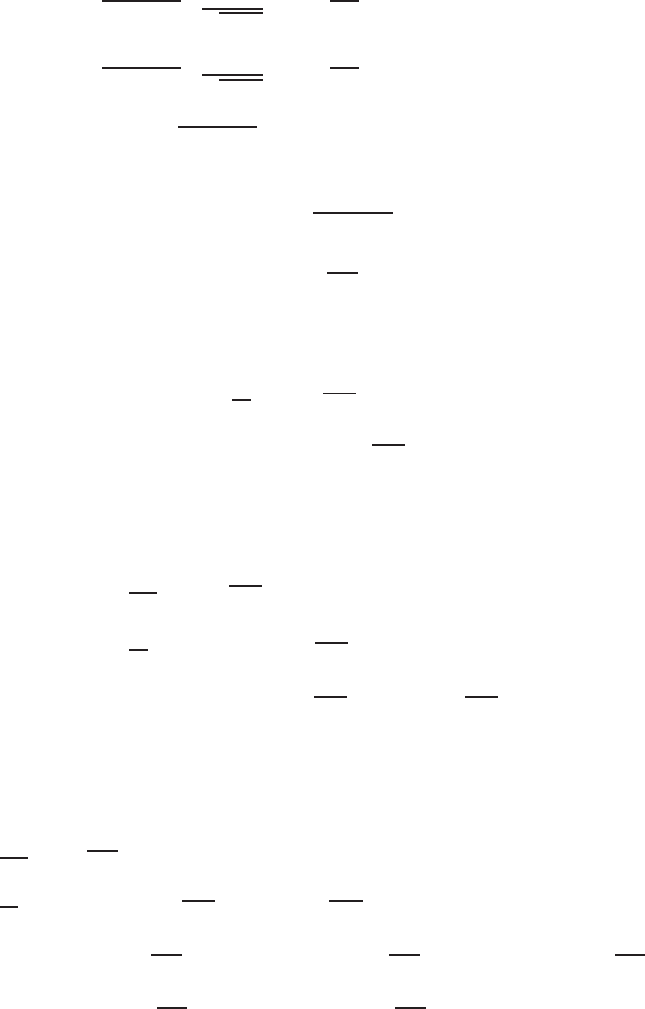

2.5.6. Example 3 of a continuous density function. Consider the normal density function given by

f( x : µ, σ )=

1

√

2 πσ

2

· e

−1

2

(

x − µ

σ

)

2

(64)

where µ and σ are parameters of the function. The shape and location of the density function

depends on the parameters µ and σ. In figure 7 the diagram the density is drawn for µ = 0, and σ =

1 and σ =2.

2.5.7. Example 4 of a continuous density function. Consider a random variable with density function

given by

f

X

(x)=

(

(p +1)x

p

0 ≤ x ≤ 1

0 otherwise

(65)

18 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

FIGURE 5. Graph of Distribution Function of Density Function xe

−x

1 2 3 4 5 6 7

x

0.2

0.4

0.6

0.8

1

fHxL

where p is greater than -1. For example, if p = 0, then f

X

(x) = 1, if p = 1, then f

X

(x) = 2x and so

on. The density function with p = 2 is shown in figure 8. The distribution function with p = 2 is

shown in figure 9.

2.6. Expected value.

2.6.1. Expectation of a single random variable. Let X be a random variable with density f

X

(x). The

expected value of the random variable, denoted E(X), is defined to be

E(X)=

R

∞

−∞

xf

X

(x) dx if X is continuous

P

xX

xp

X

(x) if X is discrete

. (66)

provided the sum or integral is defined. The expected value is kind of a weighted average. It is

also sometimes referred to as the population mean of the random variable and denoted µ

X

.

2.6.2. Expectation of a function of a single random variable. Let X be a random variable with density

f

X

(X). The expected value of a function g(·) of the random variable, denoted E(g(X)), is defined to

be

E(g(X)) =

Z

∞

−∞

g(x) f (x)dx (67)

if the integral is defined.

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 19

FIGURE 6. P (1 ≤ X ≤ 2) using the Distribution Function

1 2 3 4 5 6 7

x

0.2

0.4

0.6

0.8

1

fHxL

The expectation of a random variable can also be defined using the Riemann-Stieltjes integral

where F is a monotonically increasing function of X. Specifically

E(X)=

Z

∞

−∞

xdF(x)=

Z

∞

−∞

xdF (68)

2.7. Properties of expectation.

2.7.1. Constants.

E[a] ≡

Z

∞

−∞

af

X

(x)dx

≡ a

Z

∞

−∞

f

X

(x)dx

≡ a

(69)

2.7.2. Constants multiplied by a random variable.

E[aX] ≡

Z

∞

−∞

axf

X

(x)dx

≡ a

Z

∞

−∞

xf

X

(x)dx

≡ aE[X]

(70)

20 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

FIGURE 7. Normal Density Function

- 4 - 2 2 4

x

0.1

0.2

0.3

0.4

fHxL

2.7.3. Constants multiplied by a function of a random variable.

E[ag(X)] ≡

Z

∞

−∞

ag(x) f

X

(x)dx

≡ a

Z

∞

−∞

g(x) f

X

(x)dx

≡ aE[g(X)]

(71)

2.7.4. Sums of expected values. Let X be a continuous random variable with density function f

X

(x)

and let g

1

(X) ,g

2

(X) ,g

3

(X) , ···,g

k

(X) be k functions of X. Also let c

1

,c

2

,c

3

, ···c

k

be k constants.

Then

E [c

1

g

1

(X)+c

2

g

2

(X)+···+ c

k

g

k

(X)] ≡ E [c

1

g

1

(X)] + E [c

2

g

2

(X)] + ··· + E [c

k

g

k

(X)] (72)

2.8. Example 1. Consider the density function

f

X

(x)=

(

(p +1)x

p

0 ≤ x ≤ 1

0 otherwise

(73)

where p is greater than -1. We can compute the E(X) as follows.

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 21

FIGURE 8. Density Function ( p +1)x

p

0.2 0.4 0.6 0.8 1

x

0.5

1

1.5

2

2.5

3

fHxL

E(X)=

Z

∞

−∞

xf

X

(x)dx

=

Z

1

0

x(p +1)x

p

dx

=

Z

1

0

x

(p+1)

(p +1)dx

=

x

(p+2)

(p +1)

(p +2)

1

0

=

p +1

p +2

(74)

2.9. Example 2. Consider the exponential distribution which has density function

f

X

(x)=

1

λ

e

−x

λ

0 ≤ x ≤∞,λ > 0 (75)

We can compute the E(X) as follows.

22 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

FIGURE 9. Density Function (p =1)x

p

0.2 0.4 0.6 0.8 1

x

0.2

0.4

0.6

0.8

1

FHxL

E(X)=

Z

∞

0

x

1

λ

e

−x

λ

dx

= −xe

−x

λ

|

∞

0

+

Z

∞

0

e

−x

λ

dx

u =

x

λ

,du =

1

λ

dx, v = −λe

−x

λ

,dv = e

−x

λ

dx

=0 +

Z

∞

0

e

−x

λ

dx

= − λe

−x

λ

|

∞

0

= λ

(76)

2.10. Variance.

2.10.1. Definition of variance. The variance of a single random variable X with mean µ is given by

Var( X) ≡ σ

2

≡ E

h

(X − E(X))

2

i

≡ E

h

( X − µ)

2

i

≡

Z

∞

−∞

(x − µ)

2

f

X

(x)dx

(77)

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 23

We can write this in a different fashion by expanding the last term in equation 77.

Var(X) ≡

Z

∞

−∞

(x − µ)

2

f

X

(x)dx

≡

Z

∞

−∞

(x

2

− 2 µx + µ

2

) f

X

(x)dx

≡

Z

∞

−∞

x

2

f

X

(x) dx − 2 µ

Z

∞

−∞

xf

X

(x) dx + µ

2

Z

∞

−∞

f

X

(x) dx

= E

X

2

− 2 µE [X]+µ

2

= E

X

2

− 2 µ

2

+ µ

2

= E

X

2

− µ

2

≡

Z

∞

−∞

x

2

f

X

(x)dx −

Z

∞

−∞

xf

X

(x)dx

2

(78)

The variance is a measure of the dispersion of the random variable about the mean.

2.10.2. Variance example 1. Consider the density function

f

X

(x)=

(

(p +1)x

p

0 ≤ x ≤ 1

0 otherwise

(79)

where p is greater than -1. We can compute the Var(X) as follows.

E(X)=

Z

∞

−∞

xf

X

(x)dx

=

Z

1

0

x(p +1)x

p

dx

=

x

(p+2)

(p +1)

(p +2)

|

1

0

=

p +1

p +2

E(X

2

)=

Z

1

0

x

2

(p +1)x

p

dx

=

x

(p +3)

(p +1)

(p +3)

|

1

0

=

p +1

p +3

Var( X )=E (X

2

) − E

2

( X )

=

p +1

p +3

−

p +1

p +2

2

=

p +1

(p +2)

2

(p +3)

(80)

The values of the mean and variances for various values of p are given in table 6.

24 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

TABLE 6. Mean and Variance for Distribution f

X

(x)=(p +1)x

p

for alternative

values of p

p -.5 0 1 2 ∞

E(x) 0.333 0.5 0.66667 0.75 1

Var(x) 0.08888 0.833333 0.277778 0.00047 0

2.10.3. Variance example 2. Consider the exponential distribution which has density function

f

X

(x)=

1

λ

e

−x

λ

0 ≤ x ≤∞,λ >0 (81)

We can compute the E(X

2

) as follows

E(X

2

)=

Z

∞

0

x

2

1

λ

e

−x

λ

dx

= −x

2

e

−x

λ

|

∞

0

+2

Z

∞

0

xe

−x

λ

dx

u =

x

2

λ

,du=

2 x

λ

dx, v = −λe

−x

λ

,dv = e

−x

λ

dx

=0+2

Z

∞

0

xe

−x

λ

dx

= −2 λxe

−x

λ

|

∞

0

+2

Z

∞

0

λe

−x

λ

dx

u =2x, du=2dx, v = −λe

−x

λ

,dv = e

−x

λ

dx

=0 + 2λ

Z

∞

0

e

−x

λ

dx

=(2λ)

−λe

−x

λ

|

∞

0

=(2λ)(λ )

=2λ

2

(82)

We can then compute the variance as

Var( X)=E (X

2

) − E

2

(X)

=2λ

2

− λ

2

= λ

2

(83)

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 25

3. MOMENTS AND MOMENT GENERATING FUNCTIONS

3.1. Moments.

3.1.1. Moments about the origin (raw moments). The r

th

moment about the origin of a random vari-

able X, denoted by µ

0

r

, is the expected value of X

r

; symbolically,

µ

0

r

= E(X

r

)

=

X

x

x

r

f

X

(x)

(84)

for r = 0, 1, 2, . . . when X is discrete and

µ

0

r

= E( X

r

)

=

Z

∞

−∞

x

r

f

X

(x) dx

(85)

when X is continuous. The r

th

moment about the origin is only defined if E[X

r

] exists. A

moment about the origin is sometimes called a raw moment. Note that µ

0

1

= E(X)=µ

X

, the

mean of the distribution of X, or simply the mean of X. The r

th

moment is sometimes written as a

function of θ where θ is a vector of parameters that characterize the distribution of X.

3.1.2. Central moments. The r

th

moment about the mean of a random variable X, denoted by µ

r

,is

the expected value of (X − µ

X

)

r

symbolically,

µ

r

= E[(X − µ

X

)

r

]

=

X

x

(x − µ

X

)

r

f

X

(x)

(86)

for r = 0, 1, 2, . . . when X is discrete and

µ

r

= E[(X − µ

X

)

r

]

=

Z

∞

−∞

(x − µ

X

)

r

f

X

(x) dx

(87)

when X is continuous. The r

th

moment about the mean is only defined if E[(X − µ

X

)

r

] exists.

The r

th

moment about the mean of a random variable X is sometimes called the r

th

central moment

of X. The r

th

central moment of X about a is defined as E[(X −a)

r

].Ifa=µ

X

, we have the r

th

central

moment of X about µ

X

. Note that µ

1

= E[(X −µ

X

)] = 0 and µ

2

= E[(X −µ

X

)

2

] = Var[X]. Also note

that all odd moments of X around its mean are zero for symmetrical distributions, provided such

moments exist.

3.1.3. Alternative formula for the variance.

Theorem 7.

σ

2

X

= µ

0

2

− µ

2

X

(88)

26 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

Proof.

Var( X) ≡ σ

2

X

≡ E

h

(X − E(X))

2

i

≡ E

h

(X − µ

X

)

2

i

≡ E

X

2

− 2 µ

X

X + µ

2

X

= E

X

2

− 2 µ

X

E [X ]+µ

2

X

= E

X

2

− 2 µ

2

X

+ µ

2

X

= E

X

2

− µ

2

X

= µ

0

2

− µ

2

X

(89)

3.2. Moment generating functions.

3.2.1. Definition of a moment generating function. The moment generating function of a random vari-

able X is given by

M

X

(t)=Ee

tX

(90)

provided that the expectation exists for t in some neighborhood of 0. That is, there is an h>0

such that, for all t in −h<t<h, Ee

tX

exists. We can write M

X

(t) as

M

X

( t )=

(

R

∞

−∞

e

tx

f

X

(x) dx if X is continuous

P

x

e

tx

P (X = x) if X is discrete

. (91)

To understand why we call this a moment generating function consider first the discrete case.

We can write e

tx

in an alternative way using a Maclaurin series expansion. The Maclaurin series of

a function f(t) is given by

f(t)=

∞

X

n =0

f

(n)

(0)

n!

t

n

=

∞

X

n=0

f

(n)

(0)

t

n

n !

= f(0) +

f

(1)

(0)

1!

t +

f

(2)

(0)

2!

t

2

+

f

(3)

(0)

3!

t

3

+ ··· +

= f(0) + f

(1)

(0)

t

1!

+ f

(2)

(0)

t

2

2!

+ f

(3)

(0)

t

3

3!

+ ··· +

(92)

where f

(n)

is the n

th

derivative of the function with respect to t and f

(n)

(0) is the n

th

derivative

of f with respect to t evaluated at t = 0. For the function e

tx

, the requisite derivatives are

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 27

de

tx

dt

= xe

tx

,

de

tx

dt

t =0

= x

d

2

e

tx

dt

2

= x

2

e

tx

,

d

2

e

tx

dt

2

t =0

= x

2

d

3

e

tx

dt

3

= x

3

e

tx

,

d

3

e

tx

dt

3

t =0

= x

3

.

.

.

d

j

e

tx

dt

j

= x

j

e

tx

,

d

j

e

tx

dt

j

t =0

= x

j

(93)

We can then write the Maclaurin series as

e

tx

=

∞

X

n =0

d

n

e

tx

dt

n

(0)

t

n

n !

=

∞

X

n =0

x

n

t

n

n !

=1 + tx +

t

2

x

2

2!

+

t

3

x

3

3!

+ ··· +

t

r

x

r

r !

+ ···

(94)

We can then compute E(e

tx

)=M

X

(t) as

E

e

tx

= M

X

(t)=

X

x

e

tx

f

X

(x) (95)

=

X

x

1+tx+

t

2

x

2

2!

+

t

3

x

3

3!

+ ···+

t

rx

r

r!

+ ···

f

X

(x)

=

X

x

f

X

(x)+t

X

x

xf

X

(x)+

t

2

2!

X

x

x

2

f

X

(x)+

t

3

3!

X

x

x

3

f

X

(x)+···+

t

r

r!

X

x

x

r

f

X

(x)+···

=1 + µt + µ

0

2

t

2

2!

+ µ

0

3

t

3

3!

+ ···+ µ

0

r

t

r

r!

+ ···

In the expansion, the coefficient of

t

r

t!

is µ

0

r

, the r

th

moment about the origin of the random

variable X.

3.2.2. Example derivation of a moment generating function. Find the moment-generating function of

the random variable whose probability density is given by

f

X

(x)=

(

e

−x

for x > 0

0 elsewhere

(96)

and use it to find an expression for µ

0

r

. By definition

28 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

M

X

(t)=E

e

tX

=

Z

∞

−∞

e

tx

· e

−x

dx

=

Z

∞

o

e

−x (1 − t)

dx

=

−1

t − 1

e

−x (1 −t)

|

∞

0

=0 −

−1

1 − t

=

1

1 − t

for t < 1

(97)

As is well known, when |t| < 1 the Maclaurin’s series for

1

1 − t

is given by

M

X

(t)=

1

1 − t

=1 + t + t

2

+ t

3

+ ··· + t

r

+ ···

=1 + 1! ·

t

1!

+2!·

t

2

2!

+3!·

t

3

3

!+··· + r! ·

t

r

r!

+ ···

(98)

or we can derive it directly using equation 92. To derive it directly utilizing the Maclaurin series

we need the all derivatives of the function

1

1 − t

evaluated at 0. The derivatives are as follows

f(t)=

1

1 − t

=(1 − t)

− 1

f

(1)

=(1 − t)

− 2

f

(2)

=2(1 − t)

−3

f

(3)

=6(1 − t)

−4

f

(4)

= 24 (1 − t)

−5

f

(5)

= 120 (1 − t)

−6

.

.

.

f

(n)

= n !(1 − t)

(n +1)

.

.

.

(99)

Evaluating them at zero gives

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 29

f(0) =

1

1 − 0

=(1 − 0)

− 1

=1

f

(1)

=(1 − 0)

−2

=1=1!

f

(2)

=2(1 − 0)

−

3

=2=2!

f

(3)

=6(1 − 0)

−4

=6=3!

f

(4)

= 24 (1 − 0)

−5

= 24 = 4!

f

(5)

= 120 (1 − 0)

−6

= 120 = 5!

.

.

.

f

(n)

= n!(1 − 0)

− (n +1)

= n!

.

.

.

(100)

Now substituting in appropriate values for the derivatives of the function f(t)=

1

1 − t

we obtain

f(t)=

∞

X

n =0

f

(n)

(0)

n!

t

n

= f (0) +

f

(1)

(0)

1!

t +

f

(2)

(0)

2!

t

2

+

f

(3)

(0)

3!

t

3

+ ··· +

=1 +

1!

1!

t +

2!

2!

t

2

+

3!

3!

t

3

+ ··· +

=1 + t + t

2

+ t

3

+ ··· +

(101)

A further issue is to determine the radius of convergence for this particular function. Consider

an arbitrary series where the n

th

term is denoted by a

n

. The ratio test says that

If lim

n →∞

a

n +1

a

n

= L<1 , then the series is absolutely convergent (102a)

lim

n →∞

a

n +1

a

n

= L>1 or lim

n →∞

a

n +1

a

n

= ∞, then the series is divergent (102b)

Now consider the n

th

term and the (n+1)

th

term of the Maclaurin series expansion of

1

1 − t

.

a

n

= t

n

lim

n →∞

t

n +1

t

n

= lim

n →∞

|t | = L

(103)

The only way for this to be less than one in absolute value is for the absolute value of t to be less

than one, i.e., |t| < 1. Now writing out the Maclaurin series as in equation 98 and remembering that

in the expansion, the coefficient of

t

r

r!

is µ

0

r

, the r

th

moment about the origin of the random variable

X

30 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

M

X

(t)=

1

1 − t

=1 + t + t

2

+ t

3

+ ··· + t

r

+ ···

=1 + 1! ·

t

1!

+2!·

t

2

2!

+3!·

t

3

3

!+··· + r! ·

t

r

r!

+ ···

(104)

it is clear that µ

0

r

= r! for r = 0, 1, 2, ... For this density function E[X] = 1 because the coefficient of

t

1

1!

is 1. We can verify this by finding E[X] directly by integrating.

E (X)=

Z

∞

0

x · e

−x

dx (105)

To do so we need to integrate by parts with u = x and dv = e

−x

dx. Then du = dx and v = −e

−x

dx.

We then have

E (X)=

Z

∞

0

x · e

−x

dx, u = x, du = dx , v = −e

− x

,dv= e

−x

dx

= − xe

−x

|

∞

0

−

Z

∞

0

−e

− x

dx

=[0 − 0] −

e

− x

|

∞

0

=0 − [0 − 1] = 1

(106)

3.2.3. Moment property of the moment generating functions for discrete random variables.

Theorem 8. If M

X

(t) exists, then for any positive integer k,

d

k

M

X

( t ))

dt

k

t =0

= M

(k)

X

(0) = µ

0

k

. (107)

In other words, if you find the k

th

derivative of M

X

(t) with respect to t and then set t = 0, the

result will be µ

0

k

.

Proof.

d

k

M

X

(t)

dt

k

,orM

(k)

X

(t), is the k

th

derivative of M

X

(t) with respect to t. From equation 95 we

know that

M

X

(t)=E

e

tX

=1+tµ

0

1

+

t

2

2!

µ

0

2

+

t

3

3!

µ

0

3

+ ··· (108)

It then follows that

M

(1)

X

(t)=µ

0

1

+

2 t

2!

µ

0

2

+

3 t

2

3!

µ

0

3

+ ··· (109a)

M

(2)

X

(t)=µ

0

2

+

2 t

2!

µ

0

3

+

3 t

2

3!

µ

0

4

+ ··· (109b)

where we note that

n

n!

=

1

(n − 1)!

. In general we find that

M

( k )

X

( t )=µ

0

k

+

2 t

2!

µ

0

k +1

+

3 t

2

3!

µ

0

k +2

+ ··· . (110)

Setting t = 0 in each of the above derivatives, we obtain

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 31

M

(1)

X

(0) = µ

0

1

(111a)

M

(2)

X

(0) = µ

0

2

(111b)

and, in general,

M

(k)

X

(0) = µ

0

k

(112)

These operations involve interchanging derivatives and infinite sums, which can be justified if

M

X

(t) exists.

3.2.4. Moment property of the moment generating functions for continuous random variables.

Theorem 9. If X has mgf M

X

(t), then

EX

n

= M

(n)

X

(0) , (113)

where we define

M

(n)

X

(0) =

d

n

dt

n

M

X

(t)

t =0

(114)

The nth moment of the distribution is equal to the nth derivative of M

X

(t) evaluated at t = 0.

Proof. We will assume that we can differentiate under the integral sign and differentiate equation

91.

d

dt

M

X

(t)=

d

dt

Z

∞

−∞

e

tx

f

X

(x ) dx

=

Z

∞

−∞

d

dt

e

tx

f

X

(x ) dx

=

Z

∞

−∞

xe

tx

f

X

(x ) dx

= E

Xe

tX

(115)

Now evaluate equation 115 at t = 0.

d

dt

M

X

(t) |

t =0

= E

Xe

tX

t=0

= EX (116)

We can proceed in a similar fashion for other derivatives. We illustrate for n = 2.

32 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

d

2

dt

2

M

X

(t)=

d

2

dt

2

Z

∞

−∞

e

tx

f

X

(x ) dx

=

Z

∞

−∞

d

2

dt

2

e

tx

f

X

(x) dx

=

Z

∞

−∞

d

dt

xe

tx

f

X

(x) dx

=

Z

∞

−∞

x

2

e

tx

f

X

(x) dx

= E

X

2

e

tX

(117)

Now evaluate equation 117 at t = 0.

d

2

dt

2

M

X

(t) |

t =0

= E

X

2

e

tX

t=0

= EX

2

(118)

3.3. Some properties of moment generating functions. If a and b are constants, then

M

X+a

(t)=E

e

(X + a)t

= e

at

· M

X

(t) (119a)

M

bX

(t)=E

e

bXt

= M

X

( bt ) (119b)

MX + a

b

(t)=E

e

(

X+a

b

)

t

= e

a

b

t

· M

X

t

b

(119c)

3.4. Examples of moment generating functions.

3.4.1. Example 1. Consider a random variable with two possible values, 0 and 1, and corresponding

probabilities f(1) = p, f(0) = 1-p where we write f(·) for p(·). For this distribution

M

X

(t)=E

e

tX

= e

t · 1

f (1) + e

t · 0

f (0)

= e

t

p + e

0

(1 − p)

= e

0

(1 − p)+e

t

p

=1 − p + e

t

p

=1 + p

e

t

− 1

(120)

The derivatives are

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 33

M

(1)

X

(t)=pe

t

M

(2)

X

(t)=pe

t

M

(3)

X

(t)=pe

t

.

.

.

M

(k)

X

(t)=pe

t

.

.

.

(121)

Thus

E

X

k

= M

(k)

X

(0) = pe

0

= p (122)

We can also find this by expanding M

X

(t) using the Maclaurin series for the moment generating

function for this problem

M

X

(t)=E

e

tX

=1 + p

e

t

− 1

(123)

To obtain this we first need the series expansion of e

t

. All derivatives of e

t

are equal to e

t

. The

expansion is then given by

e

t

=

∞

X

n =0

d

n

e

t

dt

n

(0)

t

n

n!

=

∞

X

n =0

t

n

n!

=1 + t +

t

2

2!

+

t

3

3!

+ ··· +

t

r

r!

+ ···

(124)

Substituting equation 124 into equation 123 we obtain

M

X

(t)=1+pe

t

− p

=1 + p

1+t +

t

2

2!

+

t

3

3!

+ ··· +

t

r

r !

+ ···

− p

=1 + p + pt + p

t

2

2!

+ p

t

3

3!

+ ··· + p

t

r

r!

+ ··· − p

=1 + pt + p

t

2

2!

+ p

t

3

3!

+ ··· + p

t

r

r!

+ ···

(125)

We can then see that all moments are equal to p. This is also clear by direct computation

34 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

E (X) = (1) p + (0) (1 − p)=p

E

X

2

=(1

2

) p +(0

2

)(1 − p)=p

E

X

3

=(1

3

) p +(0

3

)(1 − p)=p

.

.

.

E

X

k

=(1

k

) p +(0

k

)(1 − p)=p

.

.

.

(126)

3.4.2. Example 2. Consider the exponential distribution which has a density function given by

f

X

(x)=

1

λ

e

−x

λ

, 0 ≤ x ≤∞,λ>0 (127)

For λt <1, we have

M

X

(t)=

Z

∞

0

e

tx

1

λ

e

−x

λ

dx

=

1

λ

Z

∞

0

e

−

(

1

λ

− t

)

x

dx

=

1

λ

Z

∞

0

e

−

(

1 − λt

λ

)

x

dx

=

1

λ

−λ

1 − λt

e

−

(

1 − λt

λ

)

x

|

∞

0

=

−1

1 − λt

e

−

(

1 − λt

λ

)

x

|

∞

0

=0 −

−1

1 − λt

e

0

=

1

1 − λt

(128)

We can then find the moments by differentiation. The first moment is

E(X)=

d

dt

(1 − λt)

−1

t =0

= λ (1 − λt)

−2

t =0

= λ

(129)

The second moment is

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 35

E(X

2

)=

d

2

dt

2

(1 − λt)

−1

t =0

=

d

dt

λ (1 − λt)

−2

t =0

=2λ

2

(1 − λt)

−3

t =0

=2λ

2

(130)

3.4.3. Example 3. Consider the normal distribution which has a density function given by

f(x ; µ, σ

2

)=

1

√

2πσ

2

· e

−1

2

(

x−µ

σ

)

2

(131)

Let g(x) = X - µ, where X is a normally distributed random variable with mean µ and variance

σ

2

. Find the moment-generating function for (X - µ). This is the moment generating function for

central moments of the normal distribution.

M

X

(t)=E[e

t (X − µ)

]=

1

√

2πσ

2

Z

∞

−∞

e

t (x − µ)

e

−1

2

(

x−µ

σ

)

2

dx (132)

To integrate, let u = x - µ. Then du = dx and

M

X

(t)=

1

σ

√

2π

Z

∞

−∞

e

tu

e

− u

2

2σ

2

du

=

1

σ

√

2π

Z

∞

−∞

e

h

tu −

u

2

2 σ

2

i

du

=

1

σ

√

2π

Z

∞

−∞

e

[

1

2 σ

2

(

2 σ

2

tu − u

2

)]

du

=

1

σ

√

2π

Z

∞

−∞

exp

−1

2 σ

2

(u

2

− 2σ

2

tu)

du

(133)

To simplify the integral, complete the square in the exponent of e. That is, write the second term

in brackets as

u

2

− 2σ

2

tu

=

u

2

− 2σ

2

tu + σ

4

t

2

− σ

4

t

2

(134)

This then will give

exp

−1

2σ

2

(u

2

− 2σ

2

tu)

= exp

−1

2σ

2

(u

2

− 2σ

2

tu + σ

4

t

2

− σ

4

t

2

)

= exp

−1

2 σ

2

(u

2

− 2σ

2

tu+ σ

4

t

2

)

·exp

−1

2σ

2

(−σ

4

t

2

)

= exp

−1

2σ

2

(u

2

− 2σ

2

tu + σ

4

t

2

)

· exp

σ

2

t

2

2

(135)

Now substitute equation 135 into equation 133 and simplify. We begin by making the substitu-

tion and factoring out the term exp

h

σ

2

t

2

2

i

.

36 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

M

X

(t)=

1

σ

√

2π

Z

∞

−∞

exp

−1

2 σ

2

(u

2

− 2σ

2

tu)

du

=

1

σ

√

2π

Z

∞

−∞

exp

−1

2 σ

2

(u

2

− 2σ

2

tu+ σ

4

t

2

)

· exp

σ

2

t

2

2

du

= exp

σ

2

t

2

2

1

σ

√

2π

Z

∞

−∞

exp

−1

2 σ

2

(u

2

− 2σ

2

tu+ σ

4

t

2

)

du

(136)

Now move

h

1

σ

√

2π

i

inside the integral sign, take the square root of (u

2

− 2σ

2

tu+ σ

4

t

2

) and

simplify

M

X

(t) = exp

σ

2

t

2

2

Z

∞

−∞

exp

−1

2 σ

2

(u

2

− 2σ

2

tu+ σ

4

t

2

)

σ

√

2π

du

= exp

σ

2

t

2

2

Z

∞

−∞

exp

−1

2 σ

2

(u − σ

2

t )

2

σ

√

2π

du

= e

t

2

σ

2

2

Z

∞

−∞

e

−1

2

h

u−σ

2

t

σ

i

2

σ

√

2π

du

(137)

The function inside the integral is a normal density function with mean and variance equal to

σ

2

t and σ

2

, respectively. Hence the integral is equal to 1. Then

M

X

(t)=e

t

2

σ

2

2

. (138)

The moments of u = x - µ can be obtained from M

X

(t) by differentiating. For example the first

central moment is

E(X − µ )=

d

dt

e

t

2

σ

2

2

t =0

= tσ

2

e

t

2

σ

2

2

t =0

=0

(139)

The second central moment is

E(X − µ )

2

=

d

2

dt

2

e

t

2

σ

2

2

t =0

=

d

dt

tσ

2

e

t

2

σ

2

2

t =0

=

t

2

σ

4

e

t

2

σ

2

2

+ σ

2

e

t

2

σ

2

2

t =0

= σ

2

(140)

The third central moment is

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 37

E(X − µ )

3

=

d

3

dt

3

e

t

2

σ

2

2

t =0

=

d

dt

t

2

σ

4

e

t

2

σ

2

2

+ σ

2

e

t

2

σ

2

2

t

=0

=

t

3

σ

6

e

t

2

σ

2

2

+2tσ

4

e

t

2

σ

2

2

+ tσ

4

e

t

2

σ

2

2

t =0

=

t

3

σ

6

e

t

2

σ

2

2

+3tσ

4

e

t

2

σ

2

2

t =0

=0

(141)

The fourth central moment is

E(X − µ )

4

=

d

4

dt

4

e

t

2

σ

2

2

t =0

=

d

dt

t

3

σ

6

e

t

2

σ

2

2

+3tσ

4

e

t

2

σ

2

2

t =0

=

t

4

σ

8

e

t

2

σ

2

2

+3t

2

σ

6

e

t

2

σ

2

2

+3t

2

σ

6

e

t

2

σ

2

2

+3σ

4

e

t

2

σ

2

2

t =0

=

t

4

σ

8

e

t

2

σ

2

2

+6t

2

σ

6

e

t

2

σ

2

2

+3σ

4

e

t

2

σ

2

2

t =0

=3σ

4

(142)

3.4.4. Example 4. Now consider the raw moments of the normal distribution. The density function

is given by

f(x ; µ, σ

2

)=

1

√

2πσ

2

· e

−1

2

(

x−µ

σ

)

2

(143)

To find the moment-generating function for X we integrate the following function.

M

X

(t)=E [e

tX

]=

1

√

2πσ

2

Z

∞

−∞

e

tx

e

−1

2

(

x−µ

σ

)

2

dx (144)

First rewrite the integral as follows by putting the exponents over a common denominator.

38 RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS

M

X

(t)=E [e

tX

]=

1

√

2πσ

2

Z

∞

−∞

e

tx

e

−1

2

(

x−µ

σ

)

2

dx

=

1

√

2πσ

2

Z

∞

−∞

e

−1

2 σ

2

(x−µ)

2

+ tx

dx

=

1

√

2πσ

2

Z

∞

−∞

e

−1

2 σ

2

( x−µ )

2

+

2 σ

2

tx

2 σ

2

dx

=

1

√

2πσ

2

Z

∞

−∞

e

−1

2 σ

2

[

(x −µ )

2

− 2 σ

2

tx

]

dx

(145)

Now square the term in the exponent and simplify

M

X

(t)=E[e

tX

]=

1

√

2πσ

2

Z

∞

−∞

e

−1

2 σ

2

[

x

2

− 2 µx + µ

2

− 2 σ

2

tx

]

dx

=

1

√

2πσ

2

Z

∞

−∞

e

−1

2 σ

2

[

x

2

− 2 x

(

µ + σ

2

t

)

+ µ

2

]

dx

(146)

Now consider the exponent of e and complete the square for the portion in brackets as follows.

x

2

− 2x

µ + σ

2

t

+ µ

2

= x

2

− 2 x

µ + σ

2

t

+ µ

2

+2µσ

2

t + σ

4

t

2

− 2µσ

2

t − σ

4

t

2

=

x

2

− (µ + σ

2

t)

2

− 2 µσ

2

t − σ

4

t

2

(147)

To simplify the integral, complete the square in the exponent of e by multiplying and dividing

by

e

2 µσ

2

t+σ

4

t

2

2 σ

2

e

−2 µσ

2

t − σ

4

t

2

2 σ

2

=1 (148)

in the following manner

M

X

(t)=

1

√

2πσ

2

Z

∞

−∞

e

−1

2 σ

2

[

x

2

−2x

(

µ+σ

2

t

)

+ µ

2

]

dx

=

e

2µσ

2

t+σ

4

t

2

2σ

2

1

√

2πσ

2

Z

∞

−∞

e

−1

2σ

2

[

x

2

−2x

(

µ+σ

2

t

)

+µ

2

]

e

− 2µσ

2

t−σ

4

t

2

2 σ

2

dx

=

e

2µσ

2

t+σ

4

t

2

2σ

2

1

√

2πσ

2

Z

∞

−∞

e

−1

2σ

2

[

x

2

−2x

(

µ+σ

2

t

)

+µ

2

+2µσ

2

t+σ

4

t

2

]

dx

(149)

Now find the square root of

x

2

− 2x

µ + σ

2

t

+ µ

2

+2µσ

2

t + σ

4

t

2

(150)

Given we would like to have (x − something)

2

, try squaring x − (µ + σ

2

t) as follows

x − (µ + σ

2

t)

= x

2

− 2

x(µ + σ

2

t)

+

µ + σ

2

t

2

= x

2

− 2x

µ − σ

2

t

+ µ

2

+2µσ

2

t + σ

4

t

2

(151)

So

x − (µ + σ

2

t)

is the square root of x

2

− 2x

µ − σ

2

t

+ µ

2

+2µσ

2

t + σ

4

t

2

. Making the

substitution in equation 149 we obtain

RANDOM VARIABLES AND PROBABILITY DISTRIBUTIONS 39

M

X

(t)=

e

2µσ

2

t+σ

4

t

2

2

σ

2

1

√

2πσ

2

Z

∞

−∞

e

−

1

2

σ

2

[

x

2

−2x

(

µ+σ

2

t

)

+µ

2

+2µσ

2

t+σ

4

t

2

]

dx

=

e

2µσ

2

t+σ

4

t

2

2

σ

2

1

√

2πσ

2

Z

∞

−∞

e

−1

2

σ

2

([

x−(µ+σ

2

t)

])

(152)

The expression to the right of e

2µσ

2

t+σ

4

t

2

2 σ

2

is a normal density function with mean and variance

equal to µ + σ

2

t and σ

2

, respectively. Hence the integral is equal to 1. Then

M

X

(t)=

e

2µσ

2

t+σ

4

t

2

2σ

2

= e

µt+

t

2

σ

2

2

. (153)

The moments of X can be obtained from M

X

(t) by differentiating with respect to t. For example

the first raw moment is

E(X)=

d

dt

e

µt +

t

2

σ

2

2

t =0

=(µ + tσ

2

)

e

µt+

t

2

σ

2

2

t =0

= µ

(154)

The second raw moment is

E(x

2

)=

d

2

dt

2

e

µt +

t

2

σ

2

2

t=0

=

d

dt

µ + tσ

2

e

µt+

t

2

σ

2

2

t=0

=

µ + tσ

2

2

e

µt+

t

2

σ

2

2

+ σ

2

e

µt+

t

2

σ

2

2

t =0

= µ

2

+ σ

2

(155)

The third raw moment is

E(X

3

)=

d

3

dt

3

e

µt+

t

2

σ

2

2

t=0